Welcome back to Green Screen, where Grist writers break out their inner film buffs to talk movies, television, video games, and any other heretofore undiscovered screen-based media forms. This week, our star team turns to the newly released Ex Machina, from writer and director Alex Garland.

*Be warned: Spoilers below!*

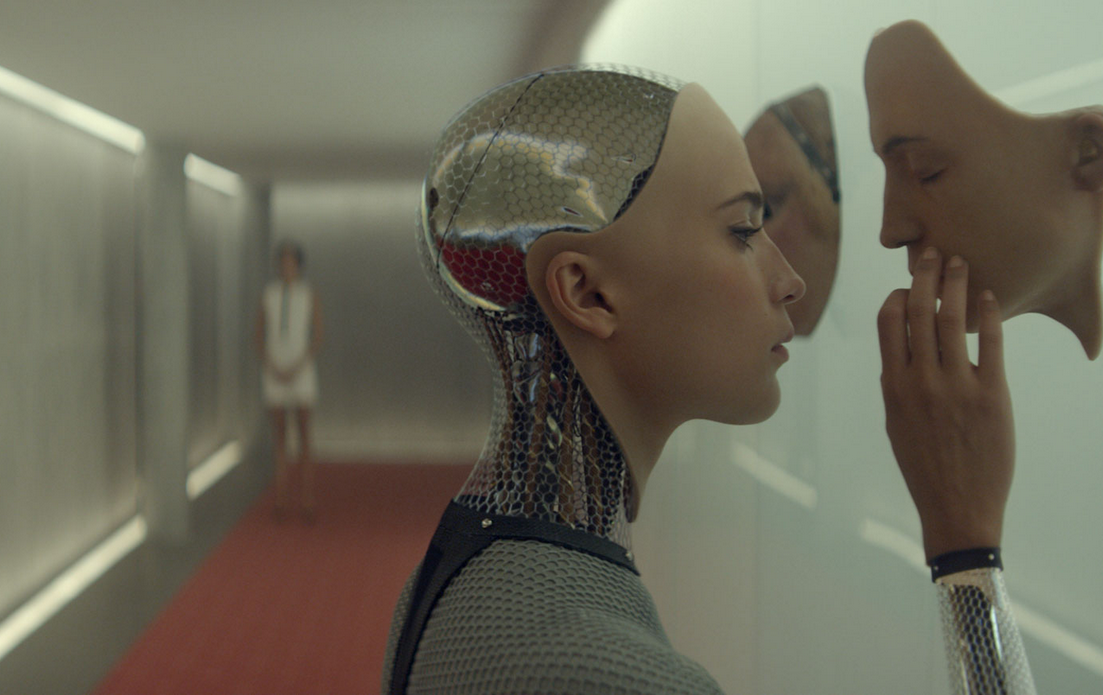

The Basics: Nathan, an obnoxious wealthy tech entrepreneur, has a secret lair in the middle of a vast untrammeled wilderness where he builds a series of increasingly intelligent humanoid robots. The most recent incarnation is named Ava, in what can only be a nod to that other proto-female, Eve.

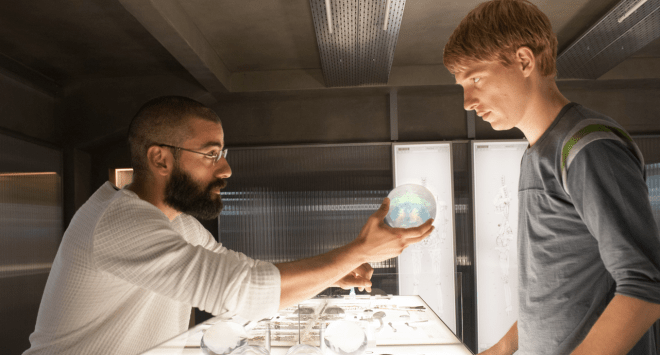

The story gets going when Ava meets Caleb, the freckled naif who works in Nathan’s company Bluebook (a thinly veiled fictionalization of Google). Caleb has been brought to Nathan’s underground lab to test Ava for signs of true consciousness, Turing-style, which means this movie is basically one huge mind game.

Why it’s green: The story touches on myriad concerns from our current moment in paranoia — online privacy, the dehumanizing aspects of technological interactions, beards — and ultimately addresses what all these things have in common: power. Ex Machina explores, quite sordidly at times, what power really means: Who gets to have it? What does it do to the powerful and the disempowered? And what happens when it is taken away? As humans, we’re used to calling the shots in the modern world — but what if the tables were turned? Whether it’s the singularity or runaway global warming, humanity faces some serious threats in the coming decades — and Ex Machina doesn’t imply that we’re very well equipped to deal with them.

Eve: First of all, I do love a story in which narcissistic techie men are forced to meet their maker, so to speak, and while this is definitely that, it did not leave me feeling good in any way.

Amelia: Bad. It felt bad. No matter whose side you’re on in this story, you come away from it feeling a little queasy. On the one hand, you are rooting for Nathan’s downfall from his first, sweaty line: “Dude!” (Or was that just me?) On the other hand, being quietly and competently maimed by your personal sentient sexbots has got to be a rough way to go. And do-gooder Caleb Smith maybe gets it worst of all, left behind by the cute killer robot he was planning to whisk away on his proverbial white stallion. I wonder what hurt more, being disabused of his male savior fantasies or slowly starving to death in complete and utter solitude?

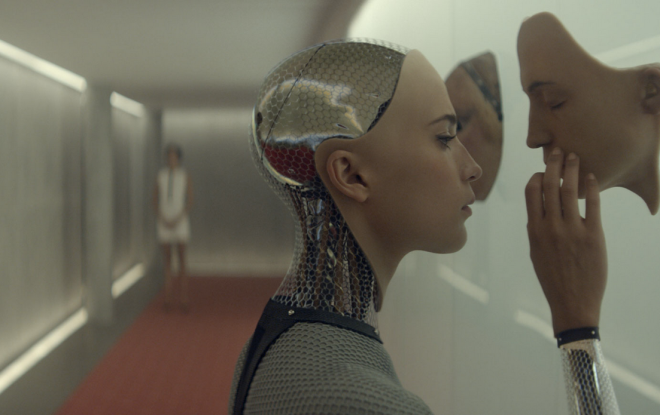

Eve: Wow, Amelia — you cold-hearted, girl. But you have a point: The theme of the movie is controlling women, and keeping them safe and sound inside where they can’t enjoy sunshine or sky or spontaneous interactions with humans, and also where they cannot murder anyone, very elegantly, with a sushi knife. Spoiler? I’m sorry.

Film4/Universal Pictures

Ana Sofia: In AI movies, I just can’t understand how these science-y dudes just blindly expect their creations to love them. That has never clicked for me.

Eve: Writer-director Alex Garland was around for a Q&A after the screening, which was nice, because we got a little insight into exactly how goddamn bleak he thought the film’s ending was. Answer: Not bleak at all! Very uplifting! Alright, Garland – I mean, again, I love a storyline about women’s empowerment, but when that empowerment has to come about through cold-wired murder, that’s pretty dark.

It bears mentioning that Ava’s cruel intelligence, derived entirely from the search histories of Bluebook’s billions of users, is essentially the ultimate accomplishment in crowd-sourcing.

Suzanne: This film is timelier than one may think: There’s a pretty hot debate going on right now in the tech world about how freaked out we should be regarding AI taking over the world. Some say it’s not a threat (or at least not in the foreseeable future), but others, including Stephen Hawking and Elon Musk, are genuinely concerned. Nick Bostrom, a philosopher and director of the Future of Humanity Institute at the University of Oxford, is with Elon and Steve. Last year, he published a book called Superintelligence about the potential dangers of AI.

He’s so concerned, in fact, that when a reporter from MIT Technology Review asked Bostrom about climate change, Bostrom was like, “Climate change? Ain’t no thang.” Well, not in so many words — but basically, Bostrom thinks that the existential threat to which we here at Grist devote our lives is only going to make the climate in some places “a bit more unfavorable.” Sounds like fear of the singularity has made someone delusional!

Amelia: That reminds me of an insight Garland gave after the movie, about Ava being able to feel “selective empathy.” What looks like an inhuman move — to leave clueless Caleb locked in his glass box — is actually a thing we all do (though hopefully we don’t take it to the sociopathic extremes that Ava achieved). We all decide who and what to care about, and how much, every day. That’s why environmentalists are often accused of caring more about polar bears than about people – and that’s why someone like Nathan can build his little fortress of solitude while pillaging everyone else’s privacy for data. Nothing matters to him more than his robo-harem — which is also his downfall.

Suzanne: About that fortress of solitude: It’s in the middle of what appears to be paradise – beautiful mountains, pristine glaciers, lush forests, etc. And while Nathan and Caleb do venture out for a hike at one point, they mostly stick to the house (which Nathan prefers to call a research facility, because he wants people to roll their eyes at him). Nathan can access nature at his leisure, but he can also pretend it’s not there. In fact, when Nathan first shows Caleb to his bedroom, he acknowledges the lack of windows — a necessity of design, because the walls are chock-full of fiber optic cables. Technology is literally blinding them to the natural world!

Ana Sofia: For AIs, nature is kept at arm’s length — because they’re not “natural” beings, maybe? They don’t deserve it? And yet they still long, like most humans, to go outside to explore and learn. Whereas Nathan, whose research facility is nestled in a beautiful forest wonderland, spends all his time playing god with computer programs in his basement lair and getting blackout wasted.

Suzanne: I’m personally a big fan of technology, but people are the worst, and they abuse the shit out of it. That’s when we end up doing silly things like changing Earth’s climate — and to compound that damage further, technology and our built environment allow us to ignore everything we do to alter the natural world. So, really: Aren’t we all just Caleb standing in a dark, depressing room covered in fiber optic cables that shield us from what we’re doing to the planet?

Eve: That is bleak! But by far the saddest moment of the whole film, for me, was the clear nod to Vladimir Nabokov’s alleged inspiration for Lolita: a chimp, given the tools to draw, made a picture of the bars of its cage. The first “real life object” that Ava, who usually spends her time making intricate pencil sketches of Mac screensavers, draws is a shadowy depiction of the window in her room.

Amelia: Can we talk a little bit about that dance scene?

Eve: Please, no. But, considering the impressive synchronicity of that choreography, one wonders exactly how many times they had to practice that number, over and over again, in a hellish disco Groundhog Day.

Amelia: But when Nathan and Kyoko (b.k.a. Proto-Ava, Mute Edition) broke out a truly sublime synchronized boogie, I felt a confusing mix of absolute horror and, well, a chuckle. It was funny! And Garland, post-screening, pointed out that for a glum story like this one, humor is actually a kind of naturalism: “It’s oxygen!”

Eve: [Insert pitch for Grist.org: “We Love Laughin’ Bout Climate Change” here. Hi, funders!]

Ana Sofia: Ultimately, as Garland pointed out, one’s interpretation of the film is about fear vs. hope. I like to think that at Grist, we’re fighting for the latter team — and just crossing our fingers that we don’t piss off the wrong robots along the way.

Suzanne: Word.