This is an introduction to how we build our web products here at Grist.org. I hope you find it interesting whether you work at Grist, are just curious, or want to decipher some of the jargon involved.

1. Good ideas

People have ideas for features and changes on grist.org all the time, which is awesome. All of these are evaluated and, if possible, merged into the overall product plan by the product owners (currently Scott, with some help). The product owners are the people responsible for the overall direction of grist.org. However, good ideas come from everywhere around Grist. If you have one, draw it out on a piece of paper, or write about it on Basecamp or in an email. Let people know about it, refine it and sell it (i.e., let everyone know how it will help us advance toward our goals: growth, engagement, influence and impact).

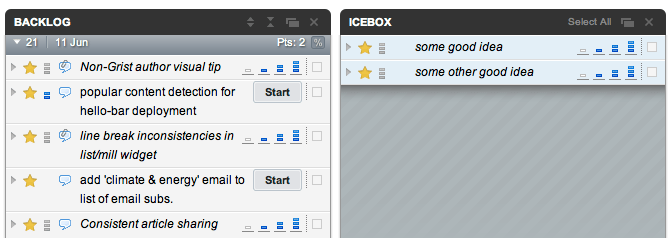

Once an idea is sufficiently well defined, it becomes a feature request and is entered into Pivotal Tracker, our project management software, in a place called the icebox. The product owners regularly review the contents of the icebox and the overall product roadmap, promoting the ideas we decide need to be done into the product backlog.

The feature backlog and icebox in Pivotal Tracker. The icebox contains stuff we may do, while the backlog contains stuff we will do.

2. Design

The backlog is an ordered list. We do the stuff at the top first, and the stuff at the bottom last. The product owners may reorder the backlog frequently as goals change. When a feature starts moving up the list, it enters a design phase where designers, developers and stakeholders work out all of the various details: how the feature should behave, perform and look. All of the what-ifs are contemplated, requirements are written down to the extent needed, and sometimes wireframes and visual designs are produced. Design happens before, during and after a feature is implemented. Sometimes the design isn’t really formed until a prototype is implemented, which means that this phase can overlap heavily with the next phase, which is …

3. Implementation

We build our software in two-week chunks called sprints. At the beginning of each sprint, we commit to the top slice of the product backlog, declare it the “current sprint” and give it an entertaining name. Our commitment to everyone at Grist is that we’ll have this list of features done and live on grist.org in two weeks. We also communicate clearly at the beginning and end of the sprint about the status of all of these features, and any adjustments that have been necessary. Sometimes priorities change mid-sprint, in which case we will usually horse-trade: we add new tasks only if we remove some of the work we were originally going to do.
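The planning and horse-trading described above can be sketched in a few lines of Python. This is only an illustration, not a description of how Pivotal Tracker works: the `Story` class, the `points` estimates, the `capacity` figure and the feature names are all assumptions made up for the example.

```python
from dataclasses import dataclass


@dataclass
class Story:
    name: str
    points: int  # hypothetical effort estimate


def plan_sprint(backlog, capacity):
    """Take stories from the top of the ordered backlog until capacity is used up."""
    sprint, used = [], 0
    for story in backlog:
        if used + story.points > capacity:
            break  # only the top slice: stop at the first story that doesn't fit
        sprint.append(story)
        used += story.points
    return sprint


def horse_trade(sprint, new_story, capacity):
    """Add a mid-sprint story, dropping lowest-priority work until it fits."""
    sprint = list(sprint)
    dropped = []
    while sprint and sum(s.points for s in sprint) + new_story.points > capacity:
        dropped.append(sprint.pop())  # the bottom of the list goes first
    sprint.append(new_story)
    return sprint, dropped


# Made-up feature names and estimates, for illustration only.
backlog = [
    Story("comment threading", 3),
    Story("new share buttons", 2),
    Story("author pages", 5),
    Story("search facets", 3),
]
sprint = plan_sprint(backlog, capacity=10)
sprint, dropped = horse_trade(sprint, Story("urgent donation banner", 4), capacity=10)
```

Here the urgent story gets in only because "author pages" goes back to the backlog, which is the trade the sprint commitment depends on.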

During implementation, we have a stand-up each day. At this short meeting, developers self-organize around the current sprint, committing to new work for the day and reporting on yesterday’s progress. More concretely, developers commit to tasks in Pivotal Tracker and put their names on them. At the next stand-up, they’ll report on how things went with that task.

Part of a current sprint, showing tasks in various states.

After a developer has finished work on a ticket in a special development environment, they tell Pivotal Tracker that they are done by marking it as finished. Before it can be tested, however, the feature must be delivered; features are delivered when they are committed to our code repository. No one tests their own code, so another developer is then deputized to test the feature. Usually this means a combination of functional and browser testing, but it may mean other things too.
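The "no one tests their own code" rule is simple enough to write down. The sketch below is a hypothetical helper, not something Pivotal Tracker provides, and the developer names are placeholders.

```python
def pick_tester(author, developers):
    """Return a developer other than the author to test the feature."""
    candidates = [dev for dev in developers if dev != author]
    if not candidates:
        raise ValueError("no one else is available to test this feature")
    return candidates[0]  # simplest choice; a real team might rotate


team = ["dev_a", "dev_b", "dev_c"]  # placeholder names
tester = pick_tester("dev_a", team)
```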

A quick list of Pivotal Tracker states:

- tasks that have not started have a gray background

- tasks that have been started are yellow, and will indicate their next possible state via a button (you can also open the ticket to see its state)

- accepted tasks have a green background

- deployed tasks have a green background and are tagged with the word “deployed”

There is also a physical manifestation of the current sprint in the office. It’s on the wall near the tech area; you’ve probably noticed our raft of sticky notes. This board represents the current sprint and mirrors Pivotal Tracker.

3a. Nerd Interlude

(Read this if you want, but feel free to skip it.)

We employ the following general philosophy regarding versioning and repositories: we maintain a clean daily svn trunk. That means we don’t commit broken code, and we isolate large or difficult-to-implement features in branches, merging them back into the trunk only once testing is complete and an integration test has passed. Furthermore, the trunk must be fully tested and clean by 11pm each night, at which point it is tagged and eligible for deployment. (End interlude.)
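For the curious, here is a minimal sketch of what the nightly tagging step could look like. In Subversion a tag is just a copy of the trunk, made with `svn copy`. The repository URL, the `trunk`/`tags` layout and the `nightly-YYYY-MM-DD` naming scheme below are assumptions for illustration, not our actual setup.

```python
from datetime import date


def nightly_tag_command(repo_url, day):
    """Build the `svn copy` command that tags the trunk for a given day."""
    tag = f"nightly-{day.isoformat()}"  # hypothetical naming scheme
    return [
        "svn", "copy",
        f"{repo_url}/trunk",       # assumes the conventional trunk/tags layout
        f"{repo_url}/tags/{tag}",
        "-m", f"Tag clean trunk as {tag}",
    ]


# Hypothetical repository URL and date, for illustration only.
cmd = nightly_tag_command("https://svn.example.org/grist", date(2011, 3, 14))

# To actually create the tag, the list could be passed to
# subprocess.run(cmd, check=True) once the trunk is known to be clean.
```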

4. Testing

Once a feature has been delivered, it is quickly tested. Small features are tested the same day. Larger ones might take a bit longer, as there are sometimes bugs. Once a feature passes its test and is integrated into the main application, it is declared accepted. Accepted features are eligible for deployment.

5. Deployment

Deployment is the process of pushing a completed feature to Grist’s production environment, usually the one on the WordPress.com cloud. To deploy a feature, we transfer it from our codebase to the codebase on WordPress.com, and wait a short time for WordPress.com engineers to review and deploy it. Because we keep clean, tested nightly tags of the trunk, we can deploy grist.org (i.e., all accepted features) at least once each day. The pause between our deployment and its appearance on grist.org is often short, but can be hours long at times. Once a feature is deployed, it’s visible to the world on grist.org.

6. Iteration

Thought we were done? But no! Now comes the part where we gather data on deployed features, continue design (that never ends) and come up with new ideas about how to improve what we see happening with Grist in the real world.

In summary, you can think of the progression like this:

idea -> definition -> icebox (via product owners) -> backlog (via product owners) -> current sprint (via tech team) -> started (when a developer begins work) -> finished (when a developer finishes work) -> delivered (when it enters our codebase) -> accepted (when it is tested) -> deployed (when it appears on grist.org) -> iteration -> …
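If you like to think in code, the same progression can be sketched as an ordered list of stages. This is only an illustration: the function names are made up, and treating iteration as looping back to a fresh idea is an assumption based on the Iteration section.

```python
# The stages from the summary above, in order.
STAGES = [
    "idea", "definition", "icebox", "backlog", "current sprint",
    "started", "finished", "delivered", "accepted", "deployed", "iteration",
]


def next_stage(stage):
    """Return the stage that follows `stage`; iteration loops back to a new idea."""
    index = STAGES.index(stage)  # raises ValueError for an unknown stage
    return STAGES[(index + 1) % len(STAGES)]


def is_deployable(stage):
    """Only accepted features are eligible for deployment."""
    return stage == "accepted"
```

For example, `next_stage("finished")` gives `"delivered"`, and a feature that is merely finished or delivered is not yet deployable.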

Here’s a list of definitions you may find useful:

Basecamp: a platform we use to discuss design, features and other stuff. Created by a religious organization known as 37Signals.

product owner: someone who is ultimately responsible for the product — it’s their job to make sure grist.org meets its goals and is great

feature request: a clear idea for a new feature — it’s good to talk about and refine these a lot

Pivotal Tracker: some software we use to organize our work, and track lots of features and bugs

icebox: a collection of features we may implement

backlog: a collection of features we will implement

design phase: a phase before, during and after an idea becomes a real thing. It’s where the plans, visual aspects, inner workings and other details of a feature are hashed out

designer: someone who is smart and designs stuff. Colloquially, a visual designer

developer: an awesome, incalculably attractive person who works near the middle of the Grist offices. Also, someone who writes code, designs and implements web applications

stakeholder: a person who has a business interest in the product. This interest might be “raising money”, “publishing content” or “engaging community members”

sprint: a two-week period in which we commit to doing a bunch of work

stand-up: a meeting where everyone stands up (so that the meeting is short). We use them to self-organize during sprints

finished: the state a feature is in when it requires no further programming

delivered: a finished feature that has been entered into Grist’s codebase

committed: a synonym for delivered

accepted: the state a feature is in when it has passed all tests

deployed: a feature that is live on grist.org, or about to be

repository: an iron fortress that contains the current state of our codebase, and all previous states

trunk: the version stream of the product from which we deploy

branch: side-streams of the product that we use for development purposes

iterate: to make repeated use of the development sequence defined above. Generally produces improvement (which is beyond definition, and in the eye of the beholder.)