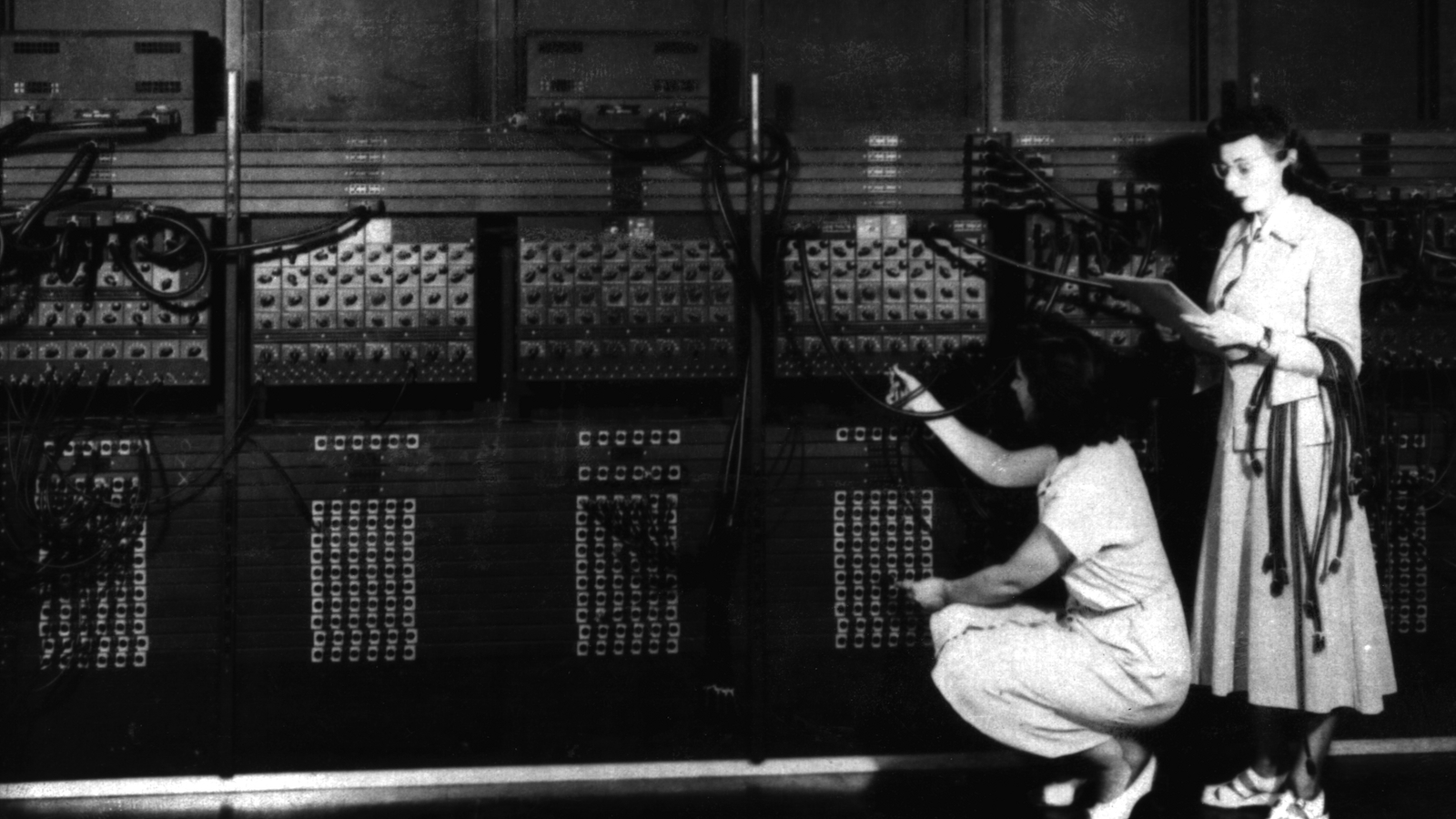

A little over a decade ago, my friend Ed took me to see ENIAC — the first programmable electronic computer. Well, it was less than 10 percent of ENIAC. It sat in a glass case in a corner of the engineering school at the University of Pennsylvania, and there were just a few parts left of what had been a 30-ton machine: large metal rectangles and a few snaking cables. We peered through the glass. It looked like the kind of thing that a very eccentric shut-in might make in a basement, which was sort of true, except that the shut-ins were engineers and the whole thing cost them about $6 million in today’s dollars to build.

“It was built with what they called ‘a baby-killer grant’,” said Ed, as we pressed our faces to the glass. “It calculated the trajectory of missile shells. All of the programmers were women.”

The only tech world that I’d known was the world of start-ups. I knew about that secondhand, both from the hype-filled articles about them and from a guy I knew who had gotten headhunted right out of my podunk Midwestern college. This guy had moved out to the Bay Area and crashed three brand-new Japanese motorcycles in rapid succession. He hated his job, and liked to talk about how he had written a program to count down the hours until he could quit and achieve his true destiny, which was traveling around the world taking pictures of poor people with an expensive camera.

The more I learned about technology, though, the more I realized how much the direction that technology takes has to do with who pays the bills to develop it in the first place. Right now, the start-up culture in the Bay Area is drunk on the idea of its own innovation, but it’s using the word “disrupt” for a reason — because its bottom line depends on using software (and the impressive lobbying dollars that a software start-up can attract) to move in on the turf of pre-existing ways of doing business.

Google, Facebook, Twitter, and other social networks make a thing that people look at, and then make money (or try to) by persuading advertisers to pay them money for access to those people — in much the same way that newspapers, magazines, and billboards have always done. Other start-ups do more or less what taxis, or payday loans, or remittances do, but a little cheaper, or with lower start-up costs, or with a better user interface. For this, they attract a lot of private investment.

What that private investment is not attracted to today is solar or renewable energy. The recent drop in oil and gas prices may have killed the Keystone XL pipeline, but it also chiseled away the profit margin of solar. And even before oil and gas prices fell, solar research was a casualty of the $30 billion in subsidies that China gave to its own solar industry, which the U.S. never tried to match. The Obama administration’s first-term stimulus package pumped billions into renewable energy, but the push didn’t last long; the most famous victim of the 2010 solar crash was Solyndra, but the hurt was industry-wide. Research drifted over into areas — like software — where start-up costs were lower and investors more plentiful.

RL Miller wrote this for Grist back in 2011:

> The governments of China and the United States take different approaches to foster industrial growth. The United States has a complicated system: a tax subsidy here, a loan guarantee there, a presidential visit here, a burst of publicity there, but nothing worthy of the name “industrial policy.” China seems to simply shovel cash to certain sectors and command them to perform.

But we, too, were once a proud nation that shoveled cash at certain sectors and demanded that they perform. Last week, I wrote an essay arguing that the Cold War is the best model for what the technological fight against climate change should look like. (Its more contemporary reboot, the “War on Terror,” is in its own way also a useful model, but we know less about its long-term effects so far.)

We should not imagine that the Cold War approach is particularly efficient or carries any guarantees. This country spent $4 trillion on nuclear weapons that, it turned out, we didn’t actually need — and then had to figure out what to do with. As the LA Times put it:

> An estimated $375 billion was spent on the bombs; $15 billion on decommissioning the bombs; $25 billion on secrecy, security and arms control; $2 trillion on delivery systems, and $1.1 trillion on command systems and air defenses. In addition, an estimated $385 billion will be spent to clean up radioactive wastes created by the arms race.
>
> Investments of $75 billion were made in technologies and programs that were abandoned, including missiles, reactors, bombers, and communications systems. The government spent, for example, $6 billion on development of a nuclear-powered aircraft engine for bombers and $700 million on research for peaceful nuclear explosions, according to the report.
>
> At its 1960s peak, the United States was producing 25 nuclear weapons per day and by 1967 had a stockpile of 32,500 bombs. To keep the production process stoked, the nation was operating 925 uranium mines.
>
> Among the other costs identified in the study: $89 million in legal fees fighting lawsuits against nuclear testing and contamination, $15 million paid to Japan as compensation for fallout from the 1954 Bravo nuclear test and $172 million paid to U.S. citizens in compensation for radiation exposure.

You would be hard-pressed to come up with a worse way to spend $4 trillion. The New Deal spent $250 billion (in today’s dollars) just on public-works projects, but we got 8,000 parks, 40,000 public buildings, 72,000 schools, and 80,000 bridges out of the deal. Nuclear weapons have also stuck around, but in a less pleasant way — over half of the U.S. Department of Energy (DOE) budget still goes to nuclear security and cleanup.

What if climate change were as scary as the 1950s-era Soviet Union, or terrorists? What would that look like? Would we come up with something as ostentatious and awe-inspiring as the Apollo space program? Would we find ourselves fighting energy proxy wars in other countries, sneakily funding solar installations and sending renewable energy propaganda out over the airwaves, Voice of America style?

The spending numbers from the Cold War or Bush’s “War on Terror” are proof of one thing: that if the U.S. is sufficiently scared of a distant foe that it doesn’t understand especially well, it’s capable of throwing an eye-popping amount of money at it. We should be able to go Cold War on climate change and — in fact — we nearly have. Back in 2003, the Apollo Alliance — a coalition of labor, business, and environmental types — came up with a plan to direct $300 billion in tax dollars towards a decade of clean energy research. The project was doomed — first by the Bush administration, then by the 2009 recession, and then by a Congress that refused to pass it. But it had a broad coalition behind it — Democrats, conservatives, labor, environmentalists.

Anyway, such coalitions aren’t always doomed. In 1991, Al Gore’s High-Performance Computing Act set aside $2.9 billion ($4.92 billion today) to fund what became the internet we know. Beginning in 1992, the Clinton administration took $30 billion out of the Pentagon’s research budget and applied that to research into commercial applications of robotics, biotechnology, national computer networks, digital imaging, and data storage. This accelerated a process of “technology transfer” from the defense-industrial complex to civilian corporate products that was already in place. IBM’s first commercial electronic computer, the 701, was originally called a “defense calculator,” a name chosen to appeal to the aerospace and defense programs that were the only organizations with the money and desire to buy one.

According to IBM legend, the impetus for developing the 701 in the first place was the outbreak of the Korean War in 1950. IBM’s chair asked the federal government if there was anything that it would like IBM to build, and the government said, “Hey, we’d like to have a bunch of machines that we can use to figure out the math involved in building aircraft and designing jet engines.” After sending the company’s director of product planning and market analysis to interview people at defense and aircraft firms, IBM did just that. But within a decade of the introduction of the 701 in 1953, IBM had turned the defense calculator into a tool for business.

I wondered, when I was younger, why so many video games were about shooting, driving, flying, and collaborating as a team to shoot even more things. Sure, kids have been playing cops-and-robbers forever, and privately I thought of myself as a better-than-average sniper. But those games didn’t seem to me like something that people would dream up if they sat down and asked, “What would be really, really fun for a kid to play?”

The Defense Department has funded video games for use in training since their earliest days. One of the first video games, Spacewar! (1962), was funded by the Pentagon. When the Cold War (and Cold War-level funding) came to an end, virtual reality battlefields also became cost-saving measures, because they were cheaper than carrying out actual field exercises. The Department of Defense generously funded virtual reality military training games. As those games got even more networked and complex, so did their commercial video game analogues. At times, the distinction between the two blurred, as in the case of America’s Army, a video game that the Army developed and gave away for free, as a recruitment tool for the non-virtual military.

We still see signs of military funding working its way through the sciences. There’s the boom in research into traumatic brain injury (what’s been called “the signature injury” of the wars in Iraq and Afghanistan), the use of video games to treat PTSD, and drones, drones, drones. I see it in the career of my friend Ed: like our Bay Area programmer friend, he graduated, found a job, and then that job shaped the person that he ultimately became. In his case, he wound up working on medical technology for dispensing medicine or anesthesia that was partly funded by the military (which wanted to use it in battlefield situations) but also had civilian applications (where it could bolster the work of doctors or medics in remote or less-than-ideal circumstances).

No one thing is enough to save the world these days, no matter what movies about hobbits or boy wizards may tell you. What is true is that some of America’s biggest windfalls, global-economy-wise, have come from the civilian versions of technologies developed by the military for strange, scary, endless-seeming wars. But while the initial buyer determines the shape of a technology, its subsequent history is remarkably unpredictable. We never know what uses “the street” will find for the things the military-industrial complex invents.

Last week, I took a wrong turn in the woods. Daylight saving time had just ended, and I had mistimed the new nightfall. Then it started to rain. I tried to retrace my steps. I swore a little. And then I thought to take out my phone. Lo and behold, when I opened Maps, there was a blue dot, representing myself, not far from the footpath that I had been looking for. I was able to use my phone to aim the blue dot that was myself in the direction of that footpath.

I can do this not because it was ever something I wanted a phone to do for me. In fact, I think it’s kind of creepy (though that doesn’t stop me from using it). I can do it because the Department of Defense was in full-on Cold War panic in the 1960s and willing to throw any amount of money at the problem of developing an ultra-precise way to orient nuclear missiles. And that keeps me wondering: If America could get it together enough to throw similar resources at climate change, what else might we come up with along the way?